The Lyceum: AI Daily — Mar 13, 2026

Photo: lyceumnews.com

Friday, March 13, 2026

The Big Picture

AI stopped being a demo this week and started being a product: on your phone, on your desk, and in police stations, where the consequences can be irreversible. In roughly 24 hours, the news delivered both a genuinely useful phone feature and the story of Angela Lipps, a Tennessee grandmother who lost nearly six months of her life to a facial recognition match. The gap between "AI can do this" and "AI is doing this right now, to real people" has effectively closed. That tension is the story.

Today's Stories

Your Phone Just Got a Real AI Agent — Not a Chatbot

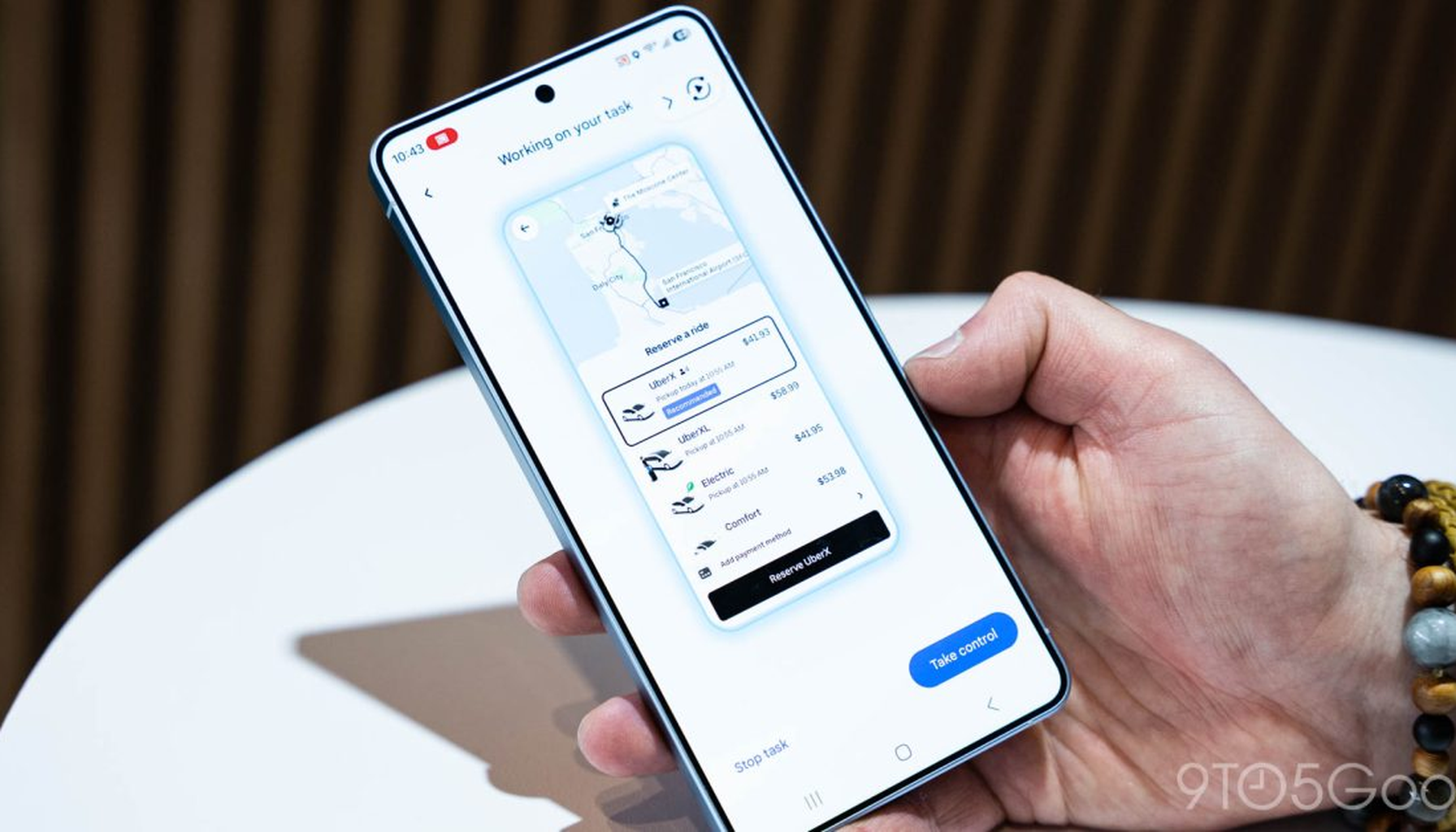

The thing everyone promised about AI assistants — that they'd actually do stuff, not just answer questions — went live on mainstream hardware last night. Gemini's task automation is now rolling out on Galaxy S26 phones, and it works like this: say "Get me a ride to the airport," and Gemini opens Uber or Lyft, fills in your details, taps through screens, and builds the whole request. It operates apps in a sandboxed virtual window, clicking around like a human would — not calling hidden APIs. Right now it works with Lyft, Uber, Grubhub, DoorDash, Uber Eats, and Starbucks.

The crucial design choice: Gemini won't press "pay." It assembles everything, then hands the final confirmation back to you. That's not a bug. It's a deliberate safety line, and one that will likely become the industry default. Early testers on r/singularity are calling it "wild" and sharing clips of multi-step workflows running autonomously. Pixel 10 support is coming but isn't live yet. This is arguably the first time a phone AI has operated third-party apps in production for a mass-market audience, not in a lab and not in a limited beta. Apple's equivalent Siri upgrade keeps slipping. Watch whether Gemini expands to calendar and email apps next; if it does, the agentic phone race could be effectively over before Apple starts.
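The "won't press pay" boundary is a familiar human-in-the-loop pattern: the agent runs every reversible step on its own and stops for explicit approval before anything irreversible. Here is a minimal sketch of that gate, assuming a hypothetical list of UI steps where irreversible ones are flagged. `Step`, `run_task`, and the `confirm` callback are invented for illustration; this is not Gemini's actual implementation or API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    description: str
    irreversible: bool = False  # hypothetical flag, e.g. "tap Pay" or "Confirm ride"


def run_task(steps: list[Step], confirm: Callable[[list[str]], bool]) -> list[str]:
    """Run reversible steps automatically; ask before any irreversible one.

    `confirm` stands in for the user's final tap. It receives the log of
    steps completed so far and returns True to proceed or False to stop.
    """
    log: list[str] = []
    for step in steps:
        if step.irreversible and not confirm(log):
            # Hand control back to the human without taking the final action.
            log.append(f"STOPPED before: {step.description}")
            return log
        log.append(f"done: {step.description}")
    return log


# Usage: a hypothetical ride request where the user declines the final tap.
ride = [
    Step("open ride app"),
    Step("set destination: airport"),
    Step("choose ride type"),
    Step("tap Request / Pay", irreversible=True),
]
print(run_task(ride, confirm=lambda summary: False))
```

The design choice is that the gate lives in the runner rather than in each app integration, so a new app inherits the same stopping rule as long as its final action is flagged.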

A Grandmother Spent Six Months in Jail Because an AI Got It Wrong

This is the story blowing up on Hacker News today, and it deserves every bit of attention it's getting. Angela Lipps, a Tennessee grandmother, spent nearly six months in jail after Fargo police misidentified her through facial recognition software in a bank fraud case. U.S. Marshals arrested her at gunpoint. No one from the Fargo Police Department ever called to question her first. Her bank records proved she was 1,200 miles away in Tennessee the entire time. She lost her home, her car, and her dog before charges were finally dismissed.

This is reportedly the eighth documented wrongful arrest involving facial recognition in the United States, and the pattern is strikingly consistent: software flags a match, a detective confirms it visually, and nobody picks up the phone. The AI output was treated as a conclusion, not a lead. The Guardian's coverage puts the human cost in sharp relief. Expect lawsuits, new rules on "AI evidence," and renewed scrutiny of police tech contracts. The underlying worry: once a model's output enters a bureaucratic pipeline, it hardens into truth unless the process is designed to stop that.

Perplexity Wants an AI Running on Your Desk 24/7

Perplexity used its first developer conference to announce "Personal Computer," software that turns a dedicated Mac mini into an always-on AI agent connected to your local files and Perplexity's cloud servers. Instead of issuing specific instructions to multiple AI tools, you describe the outcome you want, and the system breaks it into subtasks and delegates them to sub-agents. In effect, you're talking to a project manager who assigns work to a team.
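The "project manager" description matches a generic orchestrator/worker pattern: plan once, delegate each subtask to a specialist, collect the results. Perplexity hasn't published how Personal Computer works internally, so the sketch below is an assumption-laden illustration of the pattern, not its architecture. The planner is a hard-coded stub where a real system would call a model, and the sub-agent names (`research`, `files`, `writing`) are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Subtask:
    skill: str  # which hypothetical sub-agent should handle it
    goal: str


# Hypothetical sub-agents; in a real system each would wrap a model plus tools.
SUB_AGENTS: dict[str, Callable[[str], str]] = {
    "files": lambda goal: f"[files] gathered local docs for {goal}",
    "research": lambda goal: f"[research] notes on {goal}",
    "writing": lambda goal: f"[writing] draft covering {goal}",
}


def plan(objective: str) -> list[Subtask]:
    """Stub planner: a real system would ask an LLM to decompose the objective."""
    return [
        Subtask("files", objective),
        Subtask("research", objective),
        Subtask("writing", objective),
    ]


def run_objective(objective: str) -> list[str]:
    """Project-manager loop: plan, delegate each subtask in order, collect results."""
    results: list[str] = []
    for task in plan(objective):
        agent = SUB_AGENTS[task.skill]
        results.append(agent(task.goal))
    return results


for line in run_objective("Q1 competitor briefing"):
    print(line)
```

The user only supplies the objective string; which tools run, and in what order, is decided by `plan`. That is the shift Srinivas describes as an operating system that "takes objectives."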

CEO Aravind Srinivas framed it sharply: "A traditional operating system takes instructions; an AI operating system takes objectives." Access requires the Perplexity Max subscription at $200/month. This is Perplexity's answer to an existential question — a company without its own frontier models needs to justify why you'd pay it rather than going directly to OpenAI, Anthropic, or Google. A practical wrinkle: developers are already hitting quota and billing errors from Perplexity's APIs, the kind of operational friction that determines whether a promising product becomes a daily tool or an abandoned experiment.

A 9B Open-Source Coding Agent Trained on Frontier-Model Behavior Reportedly Beats Much Bigger Models

A small team called Tesslate quietly released OmniCoder-9B, a 9-billion-parameter coding model fine-tuned on over 425,000 "agentic coding trajectories": recordings of frontier models like Claude Opus 4.6 and GPT-5.x actually solving real software tasks with tools, terminals, and multi-step reasoning. The model fits on a single 32GB consumer GPU even with a 262,000-token context window. Early community benchmarks suggest it rivals or beats much larger models on repo-level coding tasks, and posts on Hugging Face report GPQA Diamond scores (a graduate-level science reasoning benchmark, not a coding one) above GPT-OSS-120B's.

The key insight: learning from agents doing work may matter more than scaling raw internet data. Rather than making the model bigger, Tesslate scaled the quality of behavioral data, training on real tool calls, real error recovery, and real edit diffs. These are self-reported benchmarks without third-party verification, so treat the numbers as promising, not confirmed. But the approach is drawing serious practitioner interest, the model is Apache 2.0 licensed, and an accompanying preprint is already circulating. For startups and lean teams, a compact coding agent that runs locally, without per-call API costs, could meaningfully change how fast they ship.

ByteDance Quietly Assembles Nvidia Mega-Clusters Outside China

The Wall Street Journal reports that ByteDance is building large GPU clusters in data centers outside mainland China to access top Nvidia chips while technically staying within U.S. export-control rules. That lets ByteDance train or fine-tune large models for global products even as American policy tries to restrict chip flows to Chinese companies. It's a reminder that the next chapter of the AI race is about where you can build capacity, not just who has the best models. Expect regulators to focus more on offshore cluster arrangements and the enforcement headaches they create.

⚡ What Most People Missed

Washington just passed three AI bills with real teeth in one evening. On March 12, 2026, the Washington State Legislature gave final passage, with floor votes in both the House and the Senate, to HB 1170 (AI disclosure), HB 2225 (chatbot safety for children), and SB 5395 (AI in health insurance decisions); official vote counts had not yet been posted. State-level AI regulation is moving faster than anything in D.C., and most national coverage missed it entirely.

Sam Altman said the quiet part out loud. A quote attributed to him and circulating on Reddit, that OpenAI sees "a future where intelligence is a utility, like electricity or water, and people buy it from us on a meter," is generating real backlash. The CEO of a company that started as an open nonprofit safety project is now casually pitching pay-per-thought, and the pitch is landing in a country where a March 2026 poll already shows AI is one of the least-liked topics in America.

Qwen3.5-9B is quietly becoming the default local agent brain. Multiple practitioners on r/LocalLLaMA report that this relatively small open model is "shockingly good" for agentic coding on a 12GB GPU, reliably orchestrating editor, shell, and browser actions for real projects. As capable small models like this and OmniCoder-9B multiply, the differentiator may shift from the model itself to interoperability standards, tool-harness APIs, and shared runtimes, which could decide which local-agent ecosystems thrive.

Kotlin's creator launched a language for talking to AI in specs, not English. Andrey Breslav's CodeSpeak is a formal specification language designed to replace ambiguous prompts with contract-like syntax. The bet is that programming becomes about describing intent with precision, and an LLM compiles that into traditional code.

📅 What to Watch

- If Gemini's automation expands to calendar and email apps in the next few weeks, Apple's Siri relaunch goes from "delayed" to "irrelevant" — and the definition of what a phone assistant is changes permanently.

- If more states follow Washington's three-bill sprint, companies could be forced to implement state-by-state feature flags, geo-fencing, and separate legal wrappers for their services, raising engineering and legal costs and producing different product behavior for users in different states.

- If OmniCoder-style fine-tunes keep outperforming brute-force scale, the economics of software development shift toward tiny local models — and the cloud API pricing moats that fund frontier labs start leaking.

- If regulators tighten rules on offshore GPU clusters, the AI race shifts further from "who has the best models" to "who controls the compute, and in which jurisdiction," and ByteDance's workaround becomes either the template everyone copies or the precedent everyone prosecutes.

- If U.S. cities move to restrict facial recognition after the Fargo case, municipalities may cancel or renegotiate vendor contracts and try to shift liability onto vendors, and insurers may raise premiums for police-tech deployments.
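For readers outside engineering, "state-by-state feature flags" means one codebase whose behavior switches on the user's location. A minimal sketch, assuming hypothetical flag names and a placeholder Washington override: the values below are illustrative only and are not a reading of what HB 1170, HB 2225, or SB 5395 actually require.

```python
# Hypothetical flags and overrides for illustration; NOT legal requirements.
DEFAULT_FLAGS: dict[str, bool] = {
    "ai_disclosure_banner": False,
    "minor_chat_restrictions": False,
}

STATE_OVERRIDES: dict[str, dict[str, bool]] = {
    "WA": {"ai_disclosure_banner": True, "minor_chat_restrictions": True},  # placeholder
}


def flags_for(state_code: str) -> dict[str, bool]:
    """Start from national defaults, then apply any per-state overrides."""
    flags = dict(DEFAULT_FLAGS)  # copy so the defaults are never mutated
    flags.update(STATE_OVERRIDES.get(state_code.upper(), {}))
    return flags


print(flags_for("wa"))
print(flags_for("TX"))
```

Every new state law adds another entry to `STATE_OVERRIDES`, plus the geolocation, testing, and legal review behind it. That growing table is where the engineering and legal costs in the bullet above come from.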

The Closer

A phone that lines up your coffee order and waits for you to tap pay, a grandmother who lost her dog because software said she was someone else, and a 9-billion-parameter model that learned to code by watching the big kids work. Sam Altman wants to sell you intelligence on a meter, which is a bold pitch from the head of a company that started as an open nonprofit. Stay sharp out there.

If someone you know works in AI and still isn't reading this, fix that — forward it along.