The Lyceum: AI Weekly — Mar 12, 2026

Week of March 12, 2026

The Big Picture

AI went to war this week, and not metaphorically: it helped plan airstrikes on Iran while Anthropic sued the Pentagon to reverse its blacklisting. Meanwhile, Nvidia announced it wants to compete with its own customers, a senator proposed pausing new data-center construction, and hospital paperwork became the quietest revolution in healthcare. The AI industry just discovered it can't be apolitical anymore, and the world is discovering it can't opt out of AI.

This Week's Stories

The AI Behind the Iran Strikes — and the Company That Still Objects

Here is a sentence that should not be possible: to help plan airstrikes in an active war, the U.S. military is using an AI system built by a company that it has blacklisted and that is suing the Pentagon.

In the first eleven days of Operation Epic Fury, AI-enabled targeting helped accelerate 5,500 U.S. strikes on Iran. Two sources confirmed the military is running AI systems from Palantir that rely in part on Anthropic's Claude model to identify potential targets, rank them, and assist with post-strike damage assessment — the kind of end-to-end targeting pipeline that makes the phrase "human in the loop" do a lot of heavy lifting. The head of U.S. Central Command said publicly that the military is using "advanced AI tools" to make decisions "faster than the enemy can react."

The strangest wrinkle: the Pentagon has classified Anthropic as a "supply chain risk" because of the restrictions the company places on military use of its models — yet continues using Claude because it considers it superior to alternatives. Anthropic is now suing the government to reverse its blacklisting while its technology runs inside active combat operations. OpenAI, by contrast, stepped in to fill the gap, signaling it will observe legal limits but not ethical ones — a distinction that has drawn concern from some corporate buyers.

Then the week got darker. A Tomahawk strike on a primary school in Minab reportedly killed 165 people. When Futurism asked the Pentagon whether AI was involved in targeting the school, the outlet was referred to CENTCOM, which said: "We have nothing for you on this at this time."

This is AI's most consequential battlefield deployment to date. What to watch: whether members of Congress request formal, committee-level oversight hearings (for example, before the House Permanent Select Committee on Intelligence or the Senate Armed Services Committee) — or whether this, like drone warfare before it, stays classified for a decade. The public conversation is already moving faster than the official one.

Nvidia Just Decided It Wants to Be an AI Lab, Too

You know Nvidia as the company that makes the chips powering every AI model you've ever used. This week, it announced it also wants to make the models.

According to financial filings confirmed by company executives to Wired, Nvidia plans to invest roughly $26 billion over five years to build "open-weight" AI models — meaning the trained parameters (the learned knowledge inside the AI) get released publicly, so anyone can download, modify, and run them. Think of it as publishing your recipe rather than just selling the dish.

This puts the GPU king in direct competition with OpenAI, Anthropic, and DeepSeek — companies that have been Nvidia's biggest customers. For scale: OpenAI reportedly spent around $3 billion training GPT-4. Nvidia could feasibly develop multiple frontier models with budget to spare.

Coinciding with the announcement, Nvidia unveiled Nemotron 3 Super, a roughly 128-billion-parameter open-weight model built on a novel architecture called LatentMoE — a "mixture of experts" design that activates only a fraction of the model's capacity for any given task, prioritizing accuracy per unit of computation. Nvidia says it outperforms OpenAI's GPT-OSS on several benchmarks, though those claims are self-reported. Independent evaluations should arrive within days.
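For readers who want to see what "activates only a fraction of the model's capacity" means in practice, here is a minimal sketch of generic top-k mixture-of-experts routing. It is not Nvidia's LatentMoE, whose internals are not described here. The eight toy experts, the router scores, and the function names are all hypothetical, chosen only to illustrate the idea.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and normalize their weights."""
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([router_scores[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x, experts, router_scores, k=2):
    """Run only the selected experts and blend their outputs."""
    selected = route_top_k(router_scores, k)
    output = sum(weight * experts[i](x) for i, weight in selected)
    return output, [i for i, _ in selected]

# Eight toy "experts": each simply scales its input by a different factor.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]

# Hypothetical router scores for one input; a real router learns these.
router_scores = [0.1, 2.0, -1.0, 0.3, 1.5, 0.0, -0.5, 0.2]

output, used = moe_forward(3.0, experts, router_scores, k=2)
active_fraction = len(used) / len(experts)
print(f"experts used: {used}, active fraction: {active_fraction:.0%}")
```

With k=2 out of eight experts, only a quarter of the toy model does any work for this input, which is the compute saving the design is after.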

The strategic logic is elegant and ruthless: if Nvidia builds open models optimized to run best on Nvidia chips, developers have even less reason to switch hardware. Jack Dorsey called the move "excellent." The open-source community is thrilled because it could materially lower the barrier for smaller labs and independent researchers. Nvidia's GTC conference starts March 16 — expect details.

The Senator Who Wants to Pull the Plug on AI's Power Grid

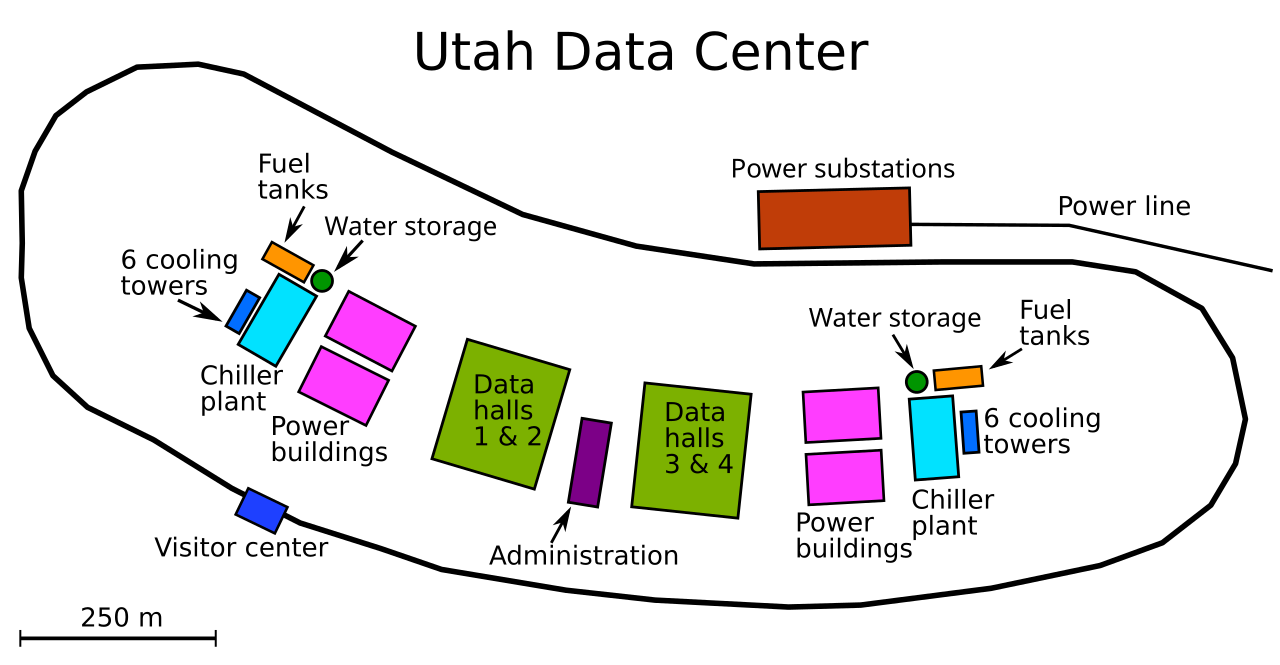

While tech companies write hundred-billion-dollar checks for new data centers — the server-packed buildings where AI models live and think — Senator Bernie Sanders this week proposed a nationwide moratorium on new AI-specific data center construction.

Sanders called AI and robotics "the most profound technological revolution in world history" and pointed to estimates that nearly 100 million U.S. jobs could be displaced over the next decade. The pause would arrive just as Amazon, Microsoft, Alphabet, and Meta are collectively ramping toward nearly $700 billion in AI capital expenditures.

The proposal has essentially no chance in the current Congress. But the politics underneath it are real. A Stateline analysis found lawmakers in at least eleven states have proposed temporary moratoria or stricter rules on new data centers, citing grid strain, water use, and tax giveaways. Politico reported the moratorium idea is gaining traction among Hill progressives. When Sanders and Ron DeSantis find themselves on the same side of an issue, something is happening in the electorate.

Watch whether this becomes a decentralized, state-by-state fight — which would be harder for the industry to lobby against than a single federal policy.

AI Agents Are Quietly Taking Over Hospital Paperwork

Nobody held a press conference. There was no viral demo. But at HIMSS 2026 — the giant annual healthcare IT conference — something significant happened: AI agents running in the background of hospital operations graduated from "pilot program" to "this is just how we work now."

Major vendors including Epic, Oracle Health, FinThrive, and Innovaccer showed live deployments — not demos — of AI agents handling prior authorizations (the insurance approval process that delays patient care for days), discharge summaries, clinical documentation, and medical coding. The bottleneck in most hospitals isn't doctors making decisions; it's paperwork. An enormous amount of clinical time vanishes into forms, codes, and approvals that exist for billing and insurance rather than patient care.

What's different from a year ago: the agents aren't just drafting text for a human to review. They're completing full workflows end-to-end, with humans reviewing exceptions rather than every output. That's a different category of automation — and it means fewer medical-records and administrative staff jobs exist than a year ago, though vendors at the conference were careful not to frame it that way.

Two under-remarked points from the conference floor: privacy concerns are starting to bubble up even where vendors emphasize utility, and some clinicians privately reported that early deployments actually increase work for frontline staff because they must audit and correct agent outputs before those systems are trusted — a classic "reliability tax" on early adopters.

A $450 Million Bet That Robots Can Handle Real Life

The humanoid robot demos have gotten very impressive. They've also mostly been impressive in controlled environments — warehouses with consistent lighting, factory floors with known layouts, labs with known props.

Rhoda AI emerged from stealth this week with a $450 million Series A — one of the largest first fundraises in robotics history — specifically to solve the gap between demo and reality. The company's thesis: prior robotics efforts have been too hardware-focused, and the real breakthrough is perception and decision-making software that lets a robot handle environments it has never seen before. Target domains include emergency response, elder care, and unpredictable household environments.

The timing is pointed. This same week, Figure released a video of its Helix 02 robot cleaning a living room autonomously — wiping tables, moving objects, arranging cushions — in what the company called "fully autonomous mode." It's impressive, and it also shows exactly how curated the environment still was. Rhoda is betting $450 million that the messy living room — the actually messy one — is the harder and more valuable problem. The investor list included firms tied to infrastructure and defense, which suggests early customers may be in those sectors.

New Products & Launches

Nemotron 3 Super — Nvidia's most advanced open-weight model, a ~128B-parameter mixture-of-experts system with million-token context windows, explicitly positioned for "agentic" tasks (multi-step, autonomous workflows). Available for download; independent benchmarks pending.

Helix 02 — Figure's latest humanoid robot, demonstrated performing a full sequence of household tasks without human intervention. A step toward real-world autonomy, though the demo environment was notably tidy.

ntransformer — An open-source project that runs a 70-billion-parameter model on a single consumer RTX 3090 by streaming data from fast NVMe storage directly to the GPU, bypassing CPU/memory bottlenecks. Previously server-only models, now accessible to hobbyists with technical skill and an $800 graphics card.
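A quick back-of-the-envelope calculation shows why streaming from storage matters for the ntransformer item above. The RTX 3090 has 24 GB of memory. The precision levels below are assumptions for illustration, since the precision ntransformer actually uses is not stated here, and the estimate counts model weights only.

```python
def weight_gigabytes(num_params, bits_per_param):
    """Approximate size of the model weights alone, in gigabytes."""
    return num_params * bits_per_param / 8 / 1e9

PARAMS = 70e9   # 70-billion-parameter model
VRAM_GB = 24    # memory on a single RTX 3090

# Assumed precisions: 16-bit, 8-bit, and 4-bit weights.
sizes = {bits: weight_gigabytes(PARAMS, bits) for bits in (16, 8, 4)}

for bits, size in sizes.items():
    verdict = "fits" if size <= VRAM_GB else "does not fit"
    print(f"{bits}-bit weights: {size:.0f} GB, {verdict} in {VRAM_GB} GB")
```

Even at an aggressive 4 bits per parameter the weights exceed the card's memory, so some of the model has to live elsewhere and be fed to the GPU as needed.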

LocalGPT — A small, Rust-based AI agent that runs entirely offline, stores its memory in local plain-text files, and never touches a server. Early traction on GitHub and Hacker News suggests real demand for privacy-first, local-only AI tools.

⚡ What Most People Missed

- The developer identity crisis is getting louder. Spotify's co-CEO said on an earnings call that top developers haven't drafted "a single line of code" since December; instead, they supervise the AI that generates it. A 2026 Harvard study of 62 million workers found that when companies adopt generative AI, junior developer employment drops roughly 9–10% within six quarters while senior employment barely budges. The ladder is disappearing from the bottom up, and nobody's sure what replaces it.

- The ex-Manus engineer who quit using function calling. A post from Manus's former backend lead hit 1,000+ upvotes on r/LocalLLaMA this week, arguing that production agent builders should abandon function calling (the standard method for telling AI which tool to use) in favor of treating the file system as the agent's permanent memory. At Manus, the average input-to-output token ratio runs 100:1 — which means the real engineering challenge is managing what the model sees, not what it does.

- The FTC quietly redrew the lines on AI liability. While everyone watched the Pentagon-Anthropic drama, the Federal Trade Commission shifted how it applies consumer protection law to AI. The agency has moved from assessing tools for what they could do wrong to focusing on what they actually did wrong. A formal policy statement on how Section 5 of the FTC Act applies to AI is expected before the end of Q1 2026, less than three weeks away.

- LLMs may have a Dunning–Kruger problem. A new arXiv preprint found that several popular models are most overconfident precisely on problems where they perform worst — echoing the human bias where beginners are most sure of themselves. A companion paper found high-quality models still fabricating facts on textbook medical questions. Models not only make things up — they often sound most certain when they're wrong.

- Yann LeCun's billion-dollar alternative bet is getting its lab. Meta's former chief AI scientist, the most prominent critic of the "just scale it up" approach, closed a $1 billion raise to build a research institute pursuing a fundamentally different path. His argument: large language models can't reason the way humans do because they lack a model of physical reality. Whether he's right won't be clear for years, but a competing thesis is now officially funded.
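The overconfidence pattern described in the Dunning–Kruger item above can be made concrete with a simple measurement: compare a model's average stated confidence with its actual accuracy, grouped by question difficulty. The sketch below is one common way to do that and is not necessarily the preprint's method. The records are made-up illustrative data, not results from any paper.

```python
from collections import defaultdict

def overconfidence_by_bucket(records):
    """Compute accuracy, mean confidence, and their gap per bucket.

    records: iterable of (bucket, stated_confidence, is_correct).
    A positive gap means the model is more confident than it is accurate.
    """
    grouped = defaultdict(list)
    for bucket, confidence, is_correct in records:
        grouped[bucket].append((confidence, is_correct))

    report = {}
    for bucket, rows in grouped.items():
        mean_confidence = sum(c for c, _ in rows) / len(rows)
        accuracy = sum(1 for _, ok in rows if ok) / len(rows)
        report[bucket] = {
            "accuracy": accuracy,
            "confidence": mean_confidence,
            "gap": mean_confidence - accuracy,
        }
    return report

# Hypothetical answers: similar stated confidence, very different accuracy.
records = [
    ("easy", 0.90, True), ("easy", 0.85, True),
    ("easy", 0.95, True), ("easy", 0.90, False),
    ("hard", 0.90, False), ("hard", 0.95, False),
    ("hard", 0.85, True), ("hard", 0.90, False),
]

report = overconfidence_by_bucket(records)
for bucket, stats in report.items():
    print(f"{bucket}: accuracy {stats['accuracy']:.2f}, "
          f"confidence {stats['confidence']:.2f}, gap {stats['gap']:+.2f}")
```

In this toy data the model sounds equally sure on both sets of questions, so the gap is largest exactly where accuracy is lowest.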

📅 What to Watch

- If a judge grants Anthropic a preliminary injunction restoring its Pentagon contracts before month's end, it could prompt an industry-wide renegotiation of where AI safety policies end and government authority begins — a precedent every AI company will have to consider.

- If the Senate Armed Services Committee or the House Permanent Select Committee on Intelligence convenes oversight hearings on AI targeting in Iran, it will be the first formal committee-level government examination of AI in active U.S. combat operations — and the first time the "human in the loop" claim gets tested under oath.

- If independent benchmarks from Hugging Face's Open LLM Leaderboard confirm Nvidia's self-reported Nemotron 3 Super numbers, the open-weight competitive landscape could shift materially — increasing pressure on competitors to optimize for alternative hardware or to invest in software that runs efficiently off-Nvidia.

- If Republican co-sponsors appear on Sanders's proposed data-center moratorium, the proposal could move from progressive messaging to a genuine legislative threat that reshapes where hyperscalers build and accelerates the push toward edge computing and local AI.

- If the FTC's formal Section 5 AI policy statement drops this month as expected, companies may change compliance practices and contract language to reflect a new enforcement posture under existing law.

A senator and a governor from opposite ends of American politics agreeing that AI uses too much electricity. A robot that can fold a throw pillow but only if you place it on the right couch. An AI model that's most confident when it's most wrong — deployed, this week, to select bombing targets.

Somewhere, a hospital billing agent is quietly filing its three-thousandth insurance form tonight, and nobody's writing a think piece about it. That's how you know it's working.

Until next week. —The Lyceum